Usual placement of Leap Motion in front of laptop.

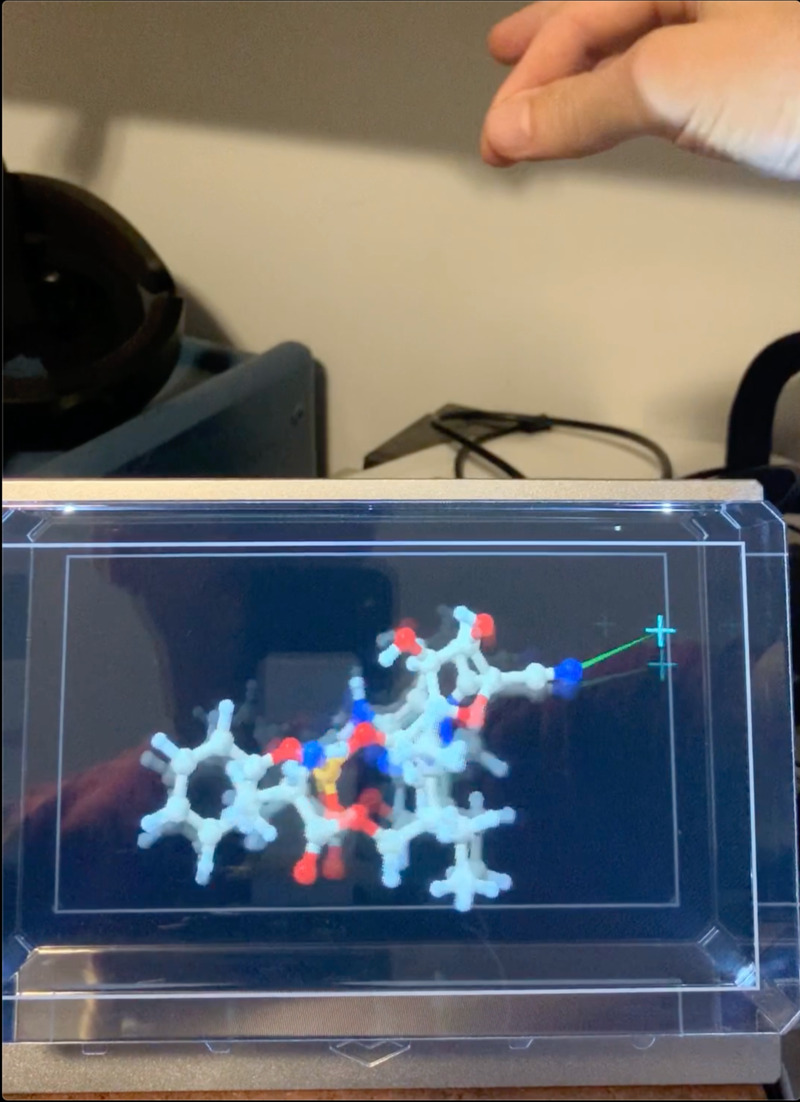

Video with Leap Motion behind LookingGlass 3D display.

Tom Goddard

July 21, 2020

The tracking quality of the Leap Motion hand tracking device is far too poor for anything beyond a demonstration. But under ideal conditions, using it reveals problems with the whole idea of hand tracking as a computer user interface.

Here are some details of how to use the Leap Motion device in the molecular visualization program ChimeraX. This is only useful as a technology demonstration and for investigating how a better tracking device might be used.


The leapmotion command is available as a plugin to ChimeraX 1.0 and can be installed using the ChimeraX menu entry Tools / More Tools.... It is only available on Windows since that is the only operating system the LeapC SDK supports. Start it with the ChimeraX command

leap

It displays a crosshair for each hand (yellow for left hand, green for right hand). Pinching with the left hand, i.e. touching index finger to thumb, emulates a mouse click of the left mouse button. Pinching with the right hand emulates a 3D click equivalent to a virtual reality button press using the mouse mode assigned to the right mouse button. For a 3D click on an atom the crosshair should be placed in front of the atom. Pinching changes the crosshair color to cyan (left hand) or magenta (right hand). The crosshairs track the hand palm position. The device tracks individual finger joints, fingers, hand, wrist and forearm orientation. But the tracking is so erratic that only the hand palm position is used. The orientation of the hand is not used.
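The hand-to-crosshair behavior described above can be summarized in a short Python sketch. This is not the plugin's code. The names `HandState`, `crosshair`, `CROSSHAIR_COLORS` and `PINCH_ACTIONS` are hypothetical and only restate the rules in the paragraph: the crosshair follows the palm position, and a pinch changes its color and triggers the emulated click.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Crosshair colors from the description above: (idle, pinching) for each hand.
CROSSHAIR_COLORS = {
    "left": ("yellow", "cyan"),
    "right": ("green", "magenta"),
}

# What a pinch emulates for each hand, as described above.
PINCH_ACTIONS = {
    "left": "left mouse button click",
    "right": "3D click using the right mouse button's mode",
}


@dataclass
class HandState:
    """Hypothetical per-frame summary of one tracked hand."""
    hand: str                                   # "left" or "right"
    palm_position: Tuple[float, float, float]   # only the palm position is used
    pinching: bool                              # index finger touching thumb


def crosshair(state: HandState) -> dict:
    """Return the crosshair position, color and emulated action for a hand."""
    idle_color, pinch_color = CROSSHAIR_COLORS[state.hand]
    action: Optional[str] = PINCH_ACTIONS[state.hand] if state.pinching else None
    return {
        "position": state.palm_position,
        "color": pinch_color if state.pinching else idle_color,
        "action": action,
    }


if __name__ == "__main__":
    print(crosshair(HandState("left", (0.0, 150.0, 20.0), pinching=False)))
    print(crosshair(HandState("right", (40.0, 160.0, 10.0), pinching=True)))
```

Finger joint and orientation data are deliberately absent from `HandState`, mirroring the point that only the palm position is reliable enough to use.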

This video shows rotating with the left hand and tugging an atom with the right hand. The Leap Motion was placed behind the display to avoid blocking the view of the very small LookingGlass display.

Hand tracking has a lot of drawbacks and a few advantages even when tracking is perfect. Using the Leap Motion under ideal conditions makes these fundamental drawbacks apparent. It is hard to imagine viable uses of even an ideal hand tracking device.

3D position and rotation. Compared to a mouse, hand tracking allows 3D positioning and also 3D rotation, so 6 degrees of freedom versus just 2 for a mouse. Another example of a 6 degree of freedom input device is the SpaceMouse.

Museum exhibits. In a hands-on science museum such as the Exploratorium in San Francisco, no exhibits use a computer mouse as it is too easily damaged or stolen. A hand tracker behind glass can allow a person to interact with a computer. A touch screen is another way to do this.

Hard to initiate actions. Buttons are a basic mechanism of most input devices to precisely begin and end a continuous motion such as a mouse drag. Hand tracking has no good equivalent, making it very hard to use for anything. ChimeraX uses a pinch hand gesture (thumb and index finger touch each other), but this gesture moves the hand, making it hard to position precisely at the beginning and end of a motion.

Poor spatial precision. A mouse allows very high spatial precision, easily clicking one atom among 1000 on a screen with millimeter precision. With hand tracking it is challenging to choose an atom with centimeter precision, 10 times worse. It is hard to hold the hand suspended in air still while a mouse rests on a surface. Performing a pinch gesture with hand held still adds more challenge. A touch screen such as a cell phone also has poorer precision than a mouse but is much better than hand tracking because the finger rests on the screen and exactly aligns with the object on the screen. With hand tracking the hand is far from the object being picked with the alignment achieved by watching a pointer on the screen.
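A factor of 10 in linear precision costs more than it may sound, because picking on a screen is two-dimensional. The rough Python sketch below counts how many non-overlapping square targets fit on a screen at each precision. The 300 mm by 200 mm screen size and the helper `pickable_targets` are assumptions for illustration only, not measurements.

```python
def pickable_targets(width_mm: float, height_mm: float, precision_mm: float) -> int:
    """Count non-overlapping square targets of side precision_mm that fit in the area."""
    return int(width_mm // precision_mm) * int(height_mm // precision_mm)


# Assumed laptop-sized screen; change these to match a real display.
SCREEN_W_MM, SCREEN_H_MM = 300.0, 200.0

mouse_targets = pickable_targets(SCREEN_W_MM, SCREEN_H_MM, 1.0)    # millimeter precision
hand_targets = pickable_targets(SCREEN_W_MM, SCREEN_H_MM, 10.0)    # centimeter precision

print(mouse_targets, hand_targets, mouse_targets // hand_targets)  # 60000 600 100
```

Under these assumptions, centimeter precision leaves roughly 100 times fewer distinguishable targets than millimeter precision.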

Poor interoperation with mouse and keyboard. Alternative computer input devices usually require taking hands away from mouse and keyboard. This makes it inefficient to keep switching between mouse and hand tracking when hand tracking can only do limited tasks. For instance, switching between hand tracking to rotate a molecule and a mouse to click user interface panels and menus is much more laborious than just rotating with the mouse and operating panels and menus with the mouse.

No sense of touch. We use our hands for everything in daily life, but almost all uses are based on touch feedback. We easily pick up a pen or glass by using touch. Doing this with hand tracking in virtual reality works poorly (clumsy, mentally taxing) because there is no touch feedback. A use of hands where we don't rely on touch is for gesturing when communicating, a use where precision requirements are low.

Occlusion. Hand tracking without putting any apparatus on the hands naturally uses video to see the hands. But the hands are easily blocked. One hand blocks the view of the other, one finger blocks the view of other fingers. The Leap Motion has two closely spaced views which often suffer from occlusion. More reliable tracking would require views from completely different directions. VR tracking of hand controllers with two lighthouse base stations (sweeping IR laser) in opposing corners of a room is an example of how to avoid occlusion.

Augmented and virtual reality. In augmented or virtual reality, simply touching, grabbing or pointing at a virtual object could trigger an action, and hand tracking could achieve this. This mechanism seems far less capable than existing VR hand controllers which have several buttons, thumbsticks and analog controls. For example, I may want to grab and rotate a molecule, or I may want to rotate a bond, tug on or delete an atom, the different tasks being triggered by different VR hand controller buttons. Virtual buttons could do that with hand tracking but pressing virtual buttons (with no sense of touch) while making a precise hand motion is more difficult.

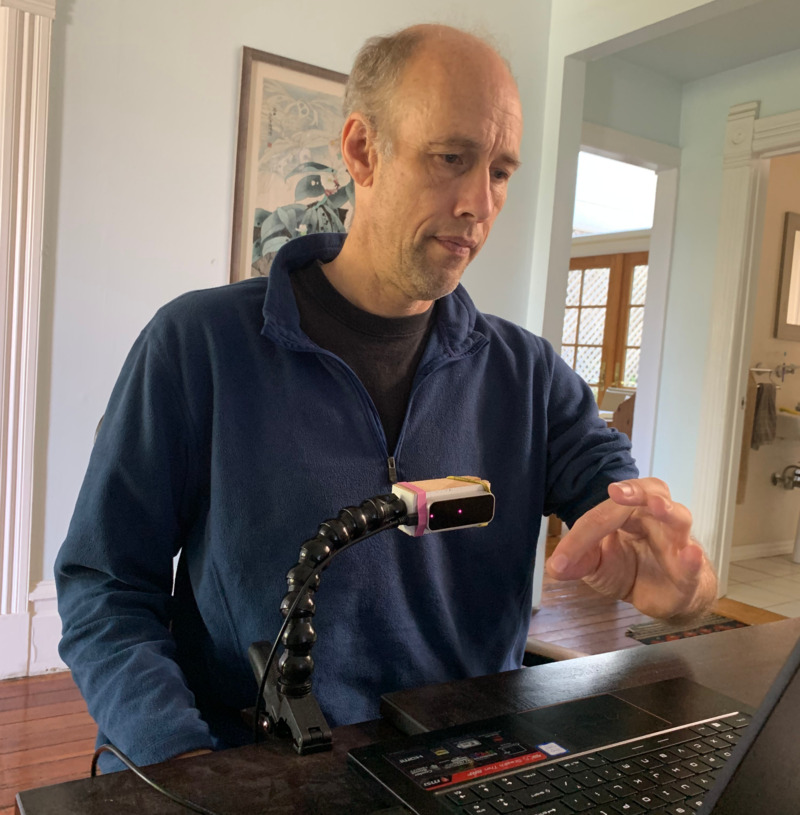

Leap Motion facing forward for more reliable tracking.

The Orion Beta 4 SDK is reported to track hands more reliably with the device facing forward (usually VR head mounting) than facing up (desktop mode). It is optimized for VR and exploits the fact that the hands are mostly viewed from the top to improve reliability of tracking. The ChimeraX leapmotion command has a "headMount true" option to enable forward facing mode.
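As a minimal sketch, the forward facing mode could be enabled from ChimeraX's Python shell by building the command text and passing it to the command runner. The helper `leapmotion_command` is hypothetical, and it assumes the option is written directly after the command name, as in `leapmotion headMount true`.

```python
def leapmotion_command(head_mount: bool = False) -> str:
    """Build the ChimeraX command text that starts Leap Motion tracking.

    Assumes the "headMount true" option follows the command name directly.
    """
    command = "leapmotion"
    if head_mount:
        command += " headMount true"
    return command


print(leapmotion_command(head_mount=True))

# Inside ChimeraX (for example in its Python shell, where `session` is defined),
# the text could be executed with the standard command runner:
#
#   from chimerax.core.commands import run
#   run(session, leapmotion_command(head_mount=True))
```

Typing the same text on the ChimeraX command line has the same effect without any Python.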

It is difficult to place the device forward facing for non-VR use. A good position is at about chest level facing forward but I didn't find any unobtrusive way of mounting it there.

Aside from poorer tracking when the Leap Motion is facing up in front of the computer keyboard, this placement also has poor ergonomics, requiring lifting hands 20 cm above the keyboard so they are far enough from the device. When using a laptop this causes hands to block the view of the screen.

I tried aiming the device at the hands from the left or right side, seemingly the most practical placement, but the tracking was unusable, often unable to recognize hands.

The device is rendered unusable by many types of tracking noise.

Getting all the conditions right for good tracking is possible but too difficult for any routine use.

I tried the Leap Motion in 2013 in Chimera, adding the leap command. Remarkably that still works in the latest Chimera 1.14 from 2019 (tested on Windows). I also tried it in an early 2013 version of ChimeraX in this video. No newer version of the Leap Motion hardware was released after 2013. Leap Motion was sold to UltraHaptics in 2019, which renamed itself Ultraleap. It is currently (2020) sold only by a handful of robotics and electronics sites for $90 USD.